The Fortress of Belief: Why We Cling to Convictions in the Face of New Facts

We live in an age of unprecedented access to information, where new evidence and diverse perspectives are merely a click away. Yet it is a common and often frustrating experience to watch someone, or even ourselves, dig in and reject compelling new information that contradicts a deeply held belief. This resistance to changing our minds is not simply a sign of stubbornness or ignorance; it is a complex psychological phenomenon rooted in identity, emotion, and the very architecture of our brains.

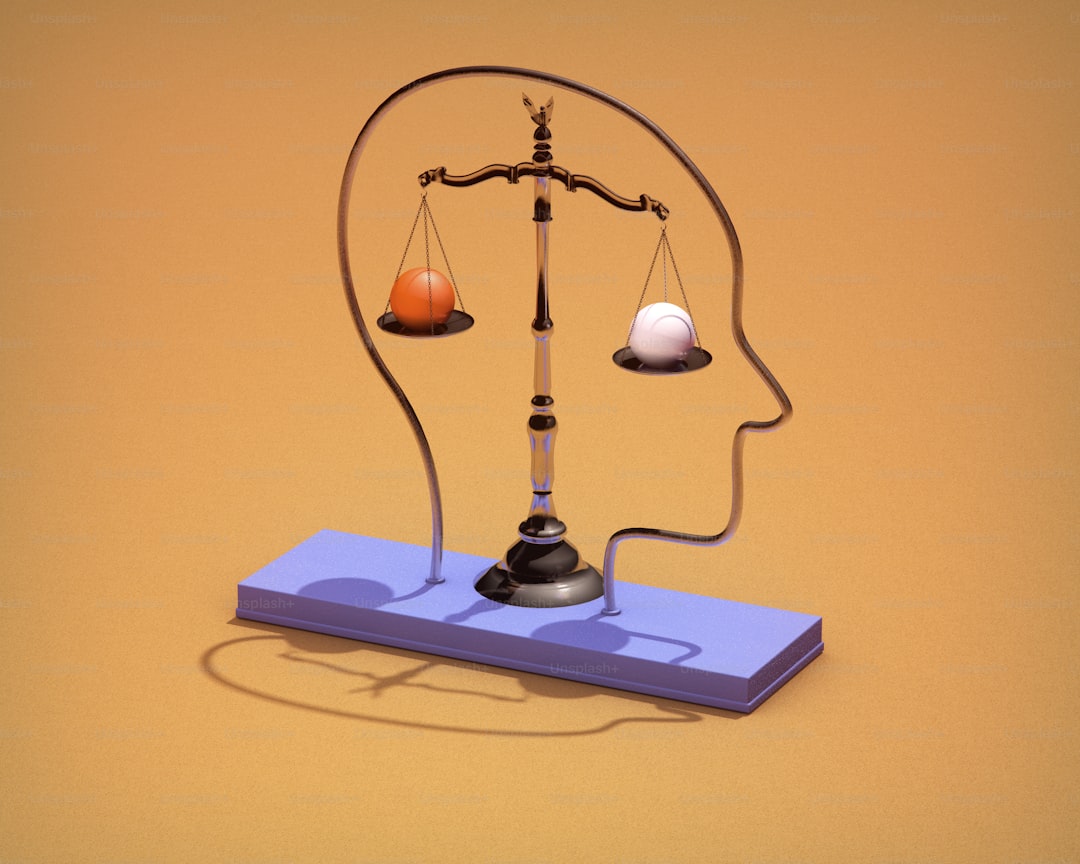

At the core of this resistance is a concept known as cognitive dissonance. The term, coined by psychologist Leon Festinger, describes the mental discomfort we experience when we hold two conflicting beliefs, or when our actions contradict our beliefs. To resolve this aversive tension, our mind’s default response is often not to evaluate the new evidence carefully, but to reject, rationalize, or minimize it. Accepting that we were wrong is psychologically costly; it can feel like a personal failure. It is often less painful to dismiss the new data as flawed, biased, or part of a conspiracy than to dismantle a piece of our understanding of the world. This protective mechanism shields our ego, but at the expense of intellectual growth.

Furthermore, our beliefs are seldom isolated pieces of data; they are woven into the fabric of our identity and social belonging. Our views on politics, religion, science, and even lifestyle choices become markers of who we are and to which tribes we belong. Changing a core belief can feel like an act of betrayal—to our past self, to our family, or to our community. The potential social cost of ostracism or ridicule can far outweigh the intellectual benefit of being correct. In this sense, clinging to a belief is an act of social survival. We are motivated to seek out information that confirms our existing views, a tendency called confirmation bias, and to surround ourselves with people who reinforce them, creating echo chambers that make contrary evidence seem alien and untrustworthy.

This process is also deeply emotional. Beliefs are often formed and held with strong feelings: passion, hope, fear, or moral conviction. When we are presented with facts that challenge a belief tied to these emotions, threat responses associated with the amygdala, a brain region involved in emotional processing, can override the more deliberate reasoning associated with the prefrontal cortex. We do not calmly assess; we feel threatened and react defensively. This is why debates often devolve into personal attacks: the challenge is felt not as an intellectual exchange but as an assault on one’s values or safety. The stronger the emotional investment, the higher the fortress walls.

Finally, our brains are fundamentally predictive organs shaped for efficiency, not truth. We construct mental models of how the world works to navigate life without being paralyzed by constant analysis. Once these models are established, they operate automatically. Integrating disruptive new evidence requires conscious, effortful cognitive work, which is mentally taxing. The brain prefers the path of least resistance, favoring the familiar model that has, until now, seemed to work. This inertia can be compounded by the so-called backfire effect, in which corrective evidence strengthens a person’s commitment to the original misconception because they are motivated to defend it more vigorously. Later replication attempts suggest this effect is less common than first reported, but it illustrates how correction alone can fail to persuade.

Understanding why people resist changing their minds is crucial for fostering more productive dialogue in a polarized world. It reveals that simply presenting more facts is rarely sufficient. Effective communication requires empathy, an acknowledgment of the emotional and identity-based underpinnings of belief, and the creation of psychologically safe spaces where changing one’s mind is seen not as a weakness, but as a strength. To navigate the complex landscape of human belief, we must speak not only to the rational mind but also to the social and emotional heart that sustains it.